News

Zafran Team

Zafran Announces Strategic Investment from Amex Ventures

February 24, 2026

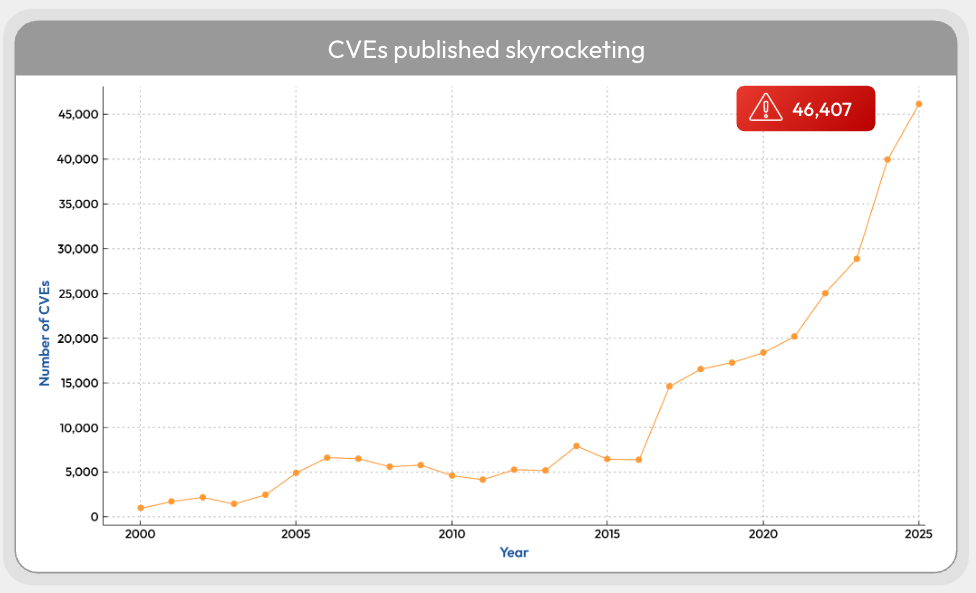

The stream of disclosed vulnerabilities is no longer simply rising. It is beginning to overwhelm the systems built to process it. In 2025 alone, 46,407 CVEs were published, up from 40,009 in 2024, which works out to an average of 127 new CVEs every day. That burden on defenders is well understood: every new disclosure adds another item to an already impossible triage queue.
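The arithmetic behind those figures is easy to check. The short Python sketch below uses only the two annual counts quoted above and assumes a 365-day year; the variable names are illustrative.

```python
# CVE counts quoted in the text above.
cves_2024 = 40_009
cves_2025 = 46_407

# Average number of CVEs published per day, assuming a 365-day year.
daily_average = cves_2025 / 365

# Year-over-year growth as a fraction of the 2024 total.
yoy_growth = (cves_2025 - cves_2024) / cves_2024

print(f"Average per day in 2025: {daily_average:.0f}")
print(f"Growth over 2024: {yoy_growth:.1%}")
```

On these numbers, the 2025 total is roughly 16% above the 2024 total.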

But the strain is no longer limited to security teams. It is now hitting the institutions responsible for turning raw CVE records into usable intelligence. As federal vulnerability programs scale back or rethink enrichment in response to record CVE growth, the ecosystem risks losing the context defenders depend on to decide what actually matters.

And this pressure is only going to intensify. New AI systems such as Anthropic’s Mythos are already demonstrating the ability to find previously undiscovered flaws at scale and far faster than traditional research workflows. This means that the volume of CVEs (and the demand for timely, high-quality enrichment) is likely to rise even further.

Last week, NIST announced that the National Vulnerability Database will no longer automatically enrich every CVE it ingests from the CVE List. Effective immediately, NVD analysts will focus only on three categories of vulnerabilities: CVEs appearing in CISA’s Known Exploited Vulnerabilities (KEV) catalog, CVEs affecting software used within the federal government, and CVEs for critical software as defined by Executive Order 14028.

CVEs outside those categories will still appear in NVD, but they may be labeled ‘Lowest Priority’ and ‘not scheduled for immediate enrichment’. The impact on vulnerability managers could be significant: many CVEs may remain without NVD-issued CPE applicability data, CWE mappings, and NIST-generated severity analysis.
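To see what this could mean for a given backlog, a team might tag its own CVE records against the three categories described above. The following is a minimal sketch, not NIST's implementation: the `CveRecord` type, its boolean fields, and both helper functions are hypothetical, and the flags would have to be populated from a team's own data sources.

```python
from dataclasses import dataclass


@dataclass
class CveRecord:
    # All fields are hypothetical flags that a team would populate itself.
    cve_id: str
    in_cisa_kev: bool = False          # listed in CISA's KEV catalog
    used_in_federal_gov: bool = False  # affects software used within the federal government
    eo14028_critical: bool = False     # critical software as defined by EO 14028


def expect_nvd_enrichment(record: CveRecord) -> bool:
    """Return True if the record falls into any of the three prioritized categories."""
    return (
        record.in_cisa_kev
        or record.used_in_federal_gov
        or record.eo14028_critical
    )


def unenriched(records: list[CveRecord]) -> list[str]:
    """Return IDs that may remain without NVD-issued CPE, CWE, or severity data."""
    return [r.cve_id for r in records if not expect_nvd_enrichment(r)]


# Placeholder IDs for illustration only; these are not real CVEs.
backlog = [
    CveRecord("CVE-0000-0001", in_cisa_kev=True),
    CveRecord("CVE-0000-0002"),
    CveRecord("CVE-0000-0003", eo14028_critical=True),
]
print(unenriched(backlog))
```

Records returned by `unenriched` are the ones for which a program would need another source of context.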

NIST has framed this retrenchment as a capacity problem, not a policy preference: by its own account, CVE submissions rose 263% between 2020 and 2025. Even before any Mythos-era acceleration, the agency was already losing the race. In 2025, NIST says it enriched a record “nearly 42,000 CVEs” yet failed to clear the backlog that began building in early 2024. The live NVD dashboard now shows thousands of records still sitting in “Awaiting Enrichment” and “Undergoing Enrichment”, alongside more than 100,000 CVEs marked “Not Scheduled”.

At the same time, the resource picture is worsening: NIST’s staffing is down by roughly 705 employees since January 2025, and the Trump administration’s FY2027 budget proposes deep cuts to the agency overall. In response, NIST has tried to outsource parts of enrichment to the ecosystem through CVMAP and to pursue a broader industry-government consortium. But those efforts have not solved the throughput problem.

In the short term, the practical impact is not necessarily severe. NIST’s own categories, including “software used by federal agencies” and “critical software”, are still broad, so unenriched CVEs might remain limited to niche business or consumer-facing applications, plugins or tools with limited privilege and limited blast radius, and software used only for research or testing. Additionally, some enrichment might be produced by the reporting vendor (the CNA) itself; NIST has explicitly said it will no longer provide a separate severity score when the CNA has already supplied one.

In the longer term, however, NIST’s decision sends a strong signal: a further acceleration in CVE discovery, driven by growing adoption of highly capable AI tools, might make the whole CVE standard unstable.

For organizations running scanner-first vulnerability management programs, this creates an operational problem: if public vulnerability enrichment can no longer be assumed at scale, defenders will need to adapt their workflows accordingly.

The real lesson in NIST’s decision is not simply that the vulnerability pipeline is growing too fast. It is that the ecosystem built around CVEs is starting to break under that growth. For years, defenders have treated public enrichment as a stable layer of security infrastructure: CVEs would be published, NVD would add the context, scanners would ingest it, and prioritization would follow. That assumption is no longer safe. As disclosure volumes continue to rise, and as AI-driven discovery accelerates them further, the organizations that keep up will not be the ones waiting for vulnerability data to arrive fully normalized, but the ones building their own context from asset intelligence, exploitability signals, vendor data, threat intelligence, and SBOM analysis. The future of exposure management will depend less on knowing that a CVE exists and more on knowing whether it creates real exposure in your environment.
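As a rough illustration of building context locally, the sketch below ranks findings by combining the signal types named above. It is a minimal sketch under stated assumptions, not a recommended scoring model: the `Finding` fields, the default base score of 5.0 for unscored CVEs, and the multipliers are all hypothetical values chosen only to show the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    # Hypothetical fields; each maps to one of the context sources named above.
    cve_id: str
    asset_present: bool               # asset intelligence / SBOM: is the component deployed?
    internet_facing: bool             # asset intelligence: is the asset externally reachable?
    known_exploited: bool             # threat intelligence / exploitability signal
    vendor_severity: Optional[float]  # CNA-supplied score (0-10), None if absent


def exposure_score(f: Finding) -> float:
    """Score a finding using local context; all weights are illustrative assumptions."""
    if not f.asset_present:
        return 0.0  # the CVE exists but creates no exposure in this environment
    # Fall back to an assumed mid-range base when no severity score is available.
    score = f.vendor_severity if f.vendor_severity is not None else 5.0
    if f.known_exploited:
        score *= 2.0
    if f.internet_facing:
        score *= 1.5
    return score


# Placeholder IDs for illustration only; these are not real CVEs.
findings = [
    Finding("CVE-0000-0001", True, False, False, 9.8),
    Finding("CVE-0000-0002", True, True, True, None),
    Finding("CVE-0000-0003", False, True, True, 9.8),
]
ranked = sorted(findings, key=exposure_score, reverse=True)
print([f.cve_id for f in ranked])
```

In this toy ranking, an unscored CVE on an exploited, internet-facing asset outranks a high-severity CVE on an internal asset, and a CVE affecting software that is not deployed drops to the bottom.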
