Molly Small

Introducing the Zafran Zero Day Agent: An Autonomous Workflow for the Post-Mythos Era

May 7, 2026

A single governed interface that enables security teams to transform their operating model and embrace AI agents, driving insights and delivering actions across the security stack.

For years, AI in security has meant chat interfaces, assistant sidebars, enrichment layers, and summarizers. Useful, but bounded. The model sat behind a text box. A human did the real work.

That era is ending. The transformation that coding agents brought to software development is now coming to cybersecurity. Recent launches, including Anthropic's Claude Code Security, signal something fundamentally different: AI systems that can investigate codebases, validate findings, and propose concrete actions in real workflows. Not just answering questions about security. Doing security.

And this isn't limited to application security. The same shift is coming to vulnerability management, detection engineering, cloud security, SOC operations, and compliance. Every security function that today depends on a practitioner manually stitching together data from multiple tools is a candidate for agent-driven transformation.

The question is no longer whether AI will participate in security operations. It's which architecture will govern that participation and enable agents to operate at scale.

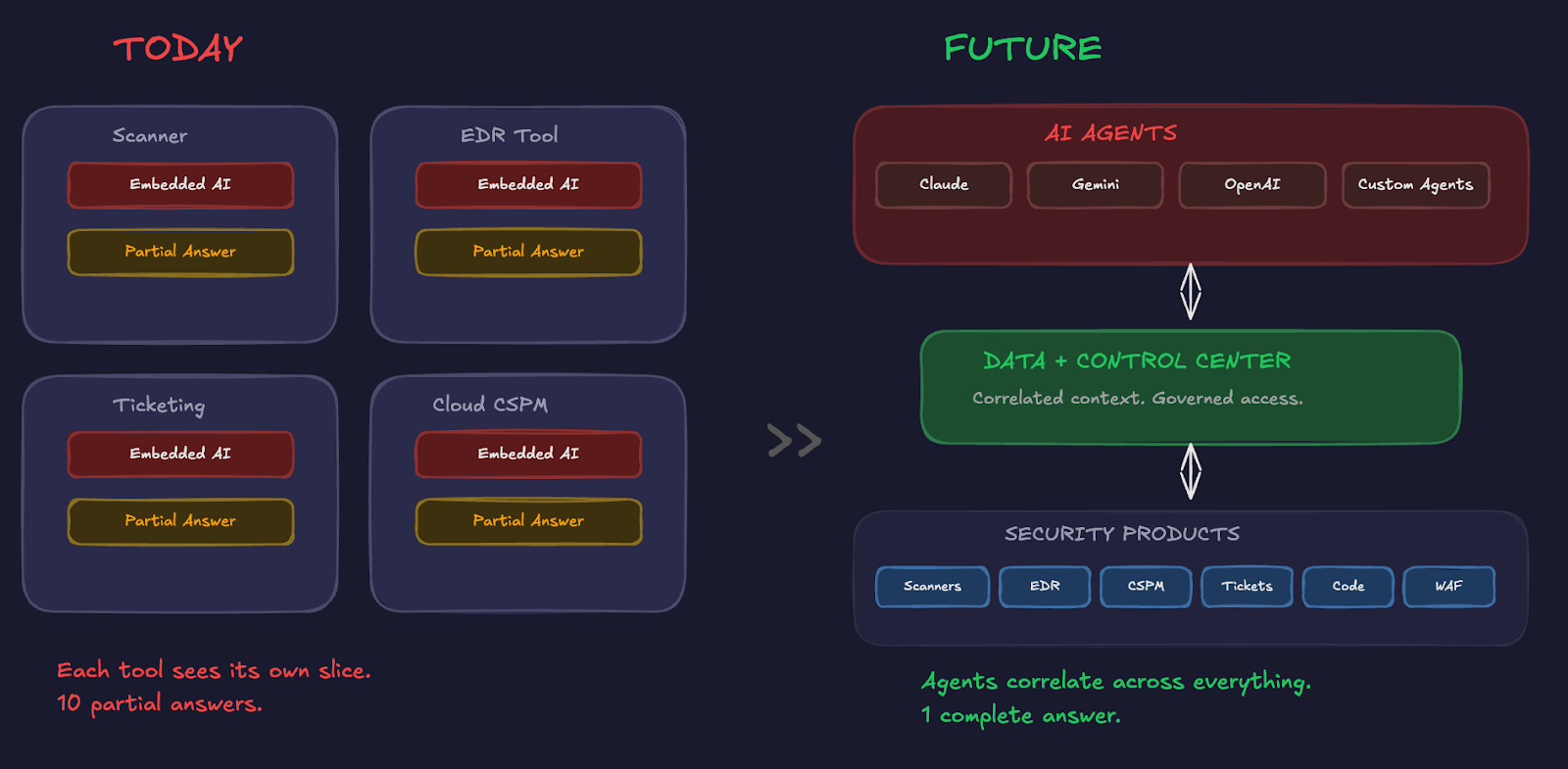

Here's the thesis: the future of security operations is not a collection of isolated AI assistants, each embedded inside a different vendor's UI, each seeing a partial slice of reality, each acting only within its own silo.

The future is a distributed environment where every security practitioner (vulnerability management analysts, detection engineers, security architects, cloud security engineers, SOC analysts) effectively gains a team of AI agents working on their behalf.

Those agents won't live neatly inside one product. They'll operate across scanners, EDR platforms, asset inventories, ticketing systems, code repositories, messaging tools, patching systems, and mitigation platforms. They'll need to correlate across all of them.

Consider the contrast. Today, each SaaS platform ships its own AI assistant. Each assistant is trapped in its own product boundary. Each sees only what that product knows. That model produces ten partial answers instead of one complete one.

In the agent-driven model, the center of gravity shifts. Security SaaS products remain important (they generate data, they execute actions) but they stop being the hub. Data, context, and control become the center.

As soon as agents operate across the environment rather than within a single product, two hard requirements emerge simultaneously.

A large enterprise might have 100,000+ assets, hundreds of millions of vulnerability findings, and 70+ security tools. Even a million-token context window can't hold all of that. Understanding actual exposure (what's internet-reachable, critically vulnerable, and not already mitigated) requires correlating data that no single tool owns.

Agents don't perform well when they're consuming raw, siloed tool output. They need normalized, correlated, deduplicated context. Without it, they hallucinate, produce shallow analysis, or give answers that sound right but miss the compensating control that changes the entire risk picture.
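To make "normalized, correlated, deduplicated" concrete, here is a minimal Python sketch. It is an illustration only, not Zafran's implementation: the `Finding` record, the scanner names, the hostnames, and the CVE IDs are all made-up sample data, and real asset matching is far more involved than lowercasing a hostname.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    scanner: str      # hypothetical source tool name
    hostname: str     # raw host identifier as reported by that tool
    cve: str
    severity: float   # CVSS-like score, 0 to 10


def normalize_host(hostname: str) -> str:
    """Lowercase and strip a trailing dot so the same asset matches across tools."""
    return hostname.strip().lower().rstrip(".")


def deduplicate(findings: list[Finding]) -> dict[tuple[str, str], dict]:
    """Collapse raw findings to one record per (asset, CVE).

    Keeps the highest reported severity and remembers every source tool.
    """
    merged: dict[tuple[str, str], dict] = {}
    for f in findings:
        key = (normalize_host(f.hostname), f.cve.upper())
        record = merged.setdefault(key, {"severity": 0.0, "sources": set()})
        record["severity"] = max(record["severity"], f.severity)
        record["sources"].add(f.scanner)
    return merged


# Sample data (invented): two tools report the same issue with different spellings.
raw = [
    Finding("scanner_a", "Web-01.corp.example.", "CVE-2024-0001", 9.8),
    Finding("scanner_b", "web-01.corp.example", "cve-2024-0001", 9.1),
    Finding("scanner_b", "db-02.corp.example", "CVE-2023-1234", 7.5),
]
context = deduplicate(raw)
```

Three raw findings collapse into two records, and the duplicated one carries both source tools. An agent given `context` reasons about one issue per asset instead of reconciling conflicting tool output itself.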

An agent that can read data is useful. An agent that can take action (open a ticket, push a firewall rule, create a pull request) is powerful. Multiple agents doing this across tools, without coordination, create a new blast radius that most organizations aren't prepared for.

CISOs need to know: What data can each agent access? What is each agent allowed to do? Who approved what? What was actually executed? How are actions monitored and audited?

The AI era doesn't just create a data challenge. It creates a governance challenge.

Making agentic security real at enterprise scale isn't about building one better agent. It's about building the infrastructure that allows many agents to operate safely and effectively.

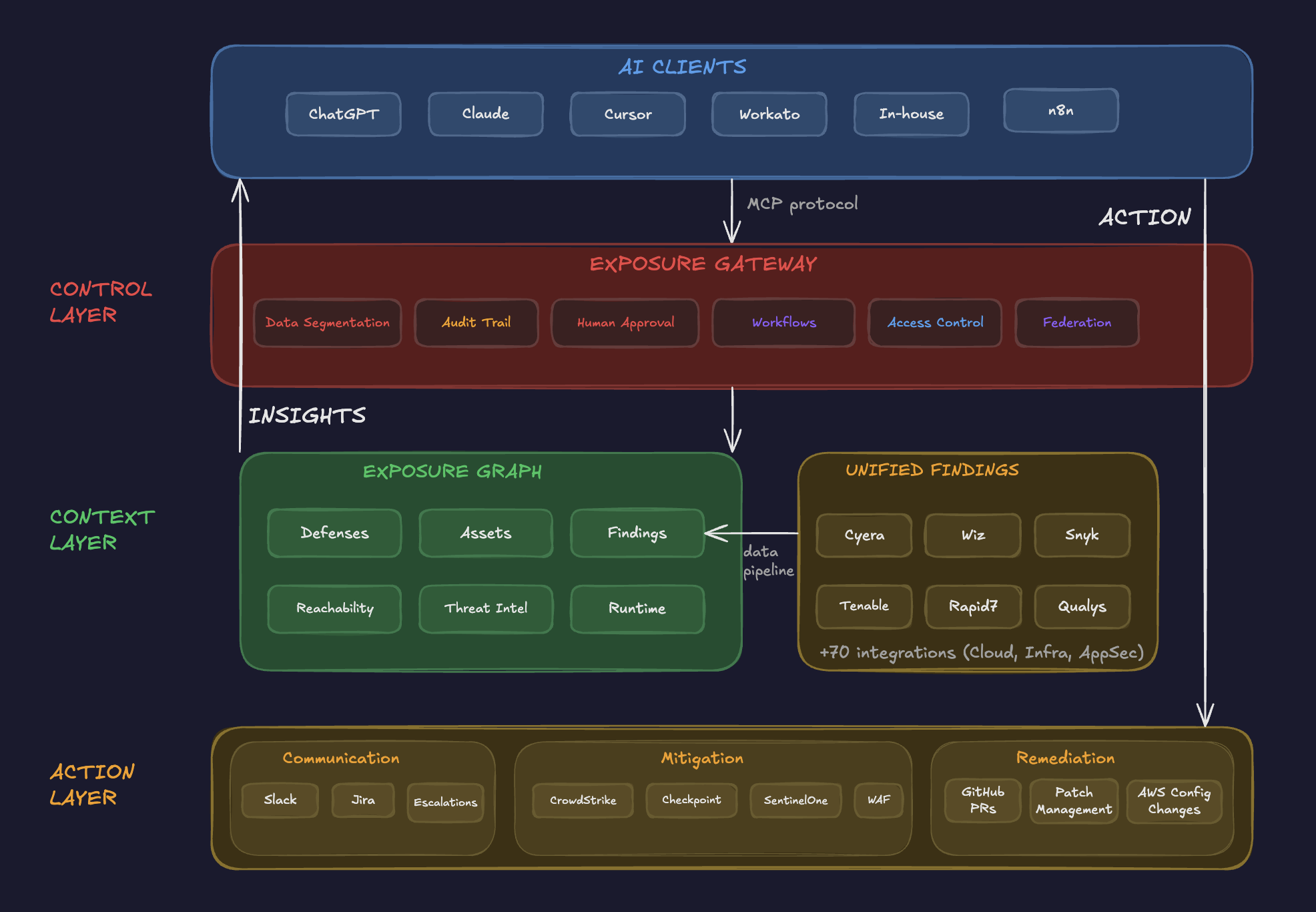

That infrastructure requires three layers: a context layer that gives agents rich, normalized security context; a control layer that governs what agents can see and do; and an action layer that provides governed paths from insight to response.

In a recent report, "AI Agent Management Platforms Are Required for Agentic Cybersecurity Success," Gartner reinforced the urgency of this architectural shift. This is the architecture Zafran has built. And the Exposure Gateway, the AI agent management platform at its center, is the control plane that makes it work.

This is the core of what we’re discussing today. The Exposure Gateway is the governance and access-control plane between every AI client and the Exposure Graph, and between every agent and the actions it can take.

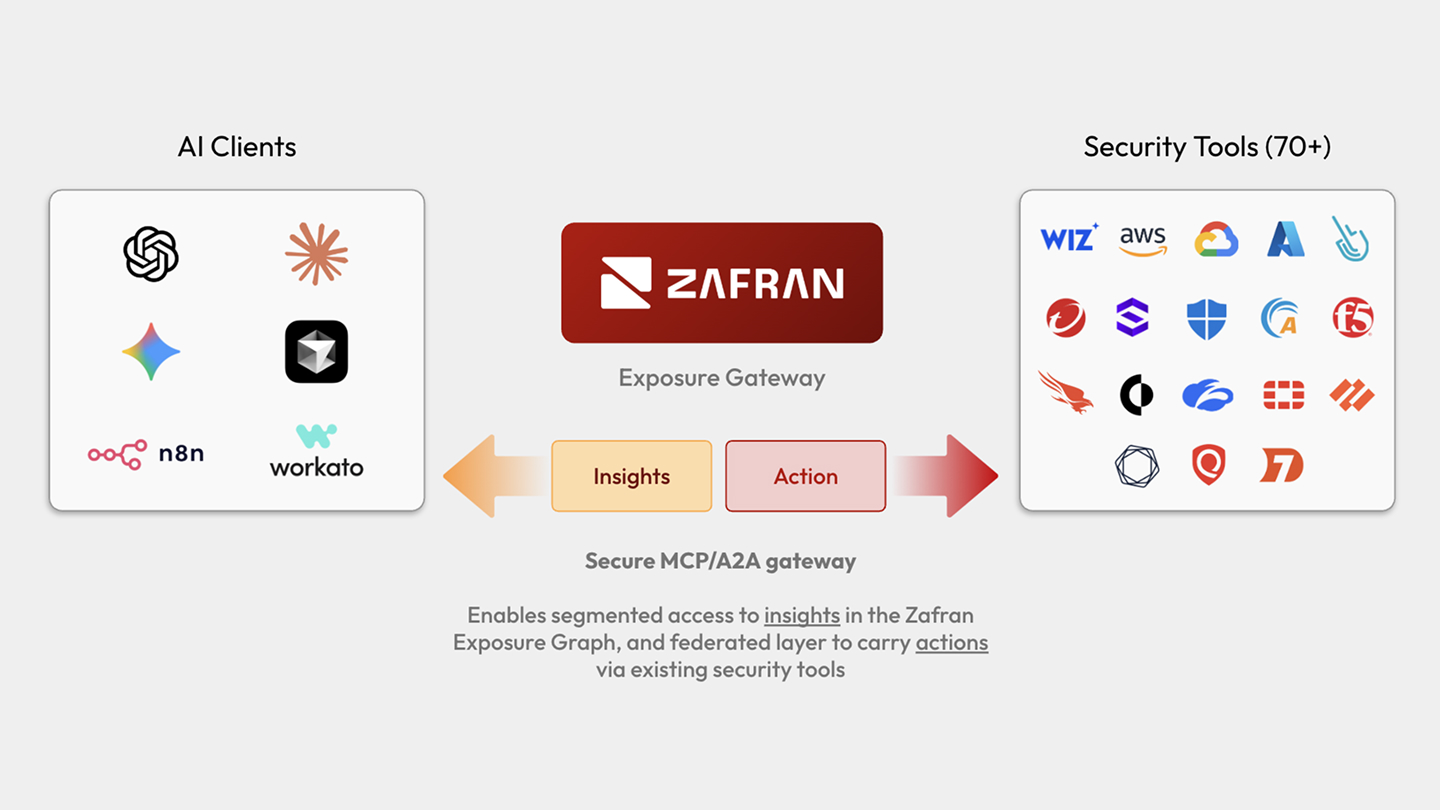

Through the Model Context Protocol (MCP), or the Agent2Agent (A2A) protocol as it matures, any AI client (Claude, ChatGPT, Cursor, Workato, n8n, or your own in-house agents) connects to Zafran through a single governed interface that acts as an AI firewall between agents and your security stack. But not every agent sees the same data or can take the same actions.

The Gateway enforces:

In the AI era, security teams need governance for agents, not just for humans.
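As a rough illustration of per-agent scoping, the sketch below shows one way a gateway could check each request against a policy and record the decision. Every name here (`AgentPolicy`, `Gateway`, the agent IDs, scopes, and action names) is hypothetical and chosen for the example. It does not describe the Exposure Gateway's actual API or policy model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    readable_scopes: frozenset   # data the agent may query
    allowed_actions: frozenset   # actions the agent may request


class Gateway:
    """Hypothetical policy check sitting between agents and the security stack."""

    def __init__(self, policies):
        self._policies = {p.agent_id: p for p in policies}
        self.audit_log = []   # every decision is recorded, allowed or not

    def authorize(self, agent_id: str, kind: str, target: str) -> bool:
        policy = self._policies.get(agent_id)
        if policy is None:
            allowed = False   # unknown agents are denied by default
        elif kind == "read":
            allowed = target in policy.readable_scopes
        elif kind == "act":
            allowed = target in policy.allowed_actions
        else:
            allowed = False
        self.audit_log.append(
            {"agent": agent_id, "kind": kind, "target": target, "allowed": allowed}
        )
        return allowed


gateway = Gateway([
    AgentPolicy("triage-agent", frozenset({"findings", "assets"}), frozenset({"open_ticket"})),
    AgentPolicy("reporting-agent", frozenset({"findings"}), frozenset()),
])

can_ticket = gateway.authorize("triage-agent", "act", "open_ticket")
can_firewall = gateway.authorize("reporting-agent", "act", "push_firewall_rule")
unknown = gateway.authorize("unregistered-agent", "read", "findings")
```

The design choice worth noting is deny-by-default: an agent without a policy, or a request kind the gateway does not recognize, is refused and still logged. That leaves a record that answers "what was attempted" as well as "what was allowed."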

Agents need a normalized, correlated, AI-ready data plane for exposure intelligence. Zafran already built the hard part.

Zafran’s Exposure Graph is a canonical context layer that sits across your entire security stack. It ingests data from vulnerability scanners (Tenable, Rapid7, Qualys, Wiz, Snyk, Cyera and more), correlates it with asset inventories, maps compensating controls and defenses, and models reachability and blast radius.

The result is a unified, deduplicated view of every asset, finding, control gap, and exposure path in the environment. A question like "What's internet-exposed, critically vulnerable, and already mitigated by compensating controls?" requires joining multiple data sources. No single vendor can answer it deterministically. And no agent should have to build that graph on its own.

The agent shouldn't have to build the graph. The graph should already exist.
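The exposure question above is, at its core, a join across three kinds of data: findings, reachability, and compensating controls. The Python sketch below shows that join on toy data. The field names, asset names, CVE IDs, and the `partition_exposure` helper are assumptions made for illustration, and a real exposure graph models far more than three flat collections.

```python
def partition_exposure(findings, reachable, mitigations, min_severity=9.0):
    """Split critical, internet-reachable findings by whether a control covers them.

    findings:    list of dicts with "asset", "cve", "severity"
    reachable:   set of asset names that are internet-reachable
    mitigations: dict mapping (asset, cve) to the name of a compensating control
    """
    unmitigated, mitigated = [], []
    for f in findings:
        # Skip anything that is not critical or not reachable from the internet.
        if f["severity"] < min_severity or f["asset"] not in reachable:
            continue
        control = mitigations.get((f["asset"], f["cve"]))
        if control is None:
            unmitigated.append(f)
        else:
            mitigated.append({**f, "control": control})
    return unmitigated, mitigated


# Invented sample data standing in for three separate tools.
findings = [
    {"asset": "web-01", "cve": "CVE-2024-0001", "severity": 9.8},
    {"asset": "api-03", "cve": "CVE-2024-0002", "severity": 9.1},
    {"asset": "db-02", "cve": "CVE-2024-0001", "severity": 9.8},   # not reachable
    {"asset": "web-01", "cve": "CVE-2023-1234", "severity": 5.3},  # not critical
]
reachable = {"web-01", "api-03"}
mitigations = {("web-01", "CVE-2024-0001"): "waf-virtual-patch"}

unmitigated, mitigated = partition_exposure(findings, reachable, mitigations)
```

On this data, `unmitigated` holds only the `api-03` finding, and `mitigated` holds the `web-01` finding annotated with its control. The join is trivial once the inputs share asset and CVE identifiers, which is why the normalization has to happen before an agent ever asks the question.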

Today, most security tools don't have production-ready MCP servers. Many have none at all. Even those with APIs often lack the structure agents need to take reliable action.

A useful security agent doesn't stop at analysis. It needs a governed path to act. The Zafran architecture supports three categories of action, all policy-bound, observable, and reversible where possible.

This makes Zafran the workflow engine for agentic remediation, and the audit and control point for everything agents do in the environment.
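To show what "policy-bound, observable, and reversible where possible" could look like in practice, here is a small Python sketch of a governed action runner. The `Action` and `ActionRunner` classes, the action names, the approver address, and the in-memory `firewall_rules` set are all hypothetical stand-ins and do not represent Zafran's action layer.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    execute: Callable[[], None]
    rollback: Optional[Callable[[], None]] = None   # None means not reversible


class ActionRunner:
    """Hypothetical runner: checks policy, requires approval, logs, supports undo."""

    def __init__(self, allowed: set):
        self.allowed = allowed
        self.audit = []    # (action name, outcome) tuples, in order
        self._done = []    # executed actions, most recent last

    def run(self, action: Action, approved_by: Optional[str] = None) -> bool:
        if action.name not in self.allowed:
            self.audit.append((action.name, "denied"))
            return False
        if approved_by is None:
            self.audit.append((action.name, "pending_approval"))
            return False
        action.execute()
        self._done.append(action)
        self.audit.append((action.name, f"executed approved_by={approved_by}"))
        return True

    def undo_last(self) -> bool:
        if not self._done:
            return False
        action = self._done.pop()
        if action.rollback is None:
            self.audit.append((action.name, "rollback_unavailable"))
            return False
        action.rollback()
        self.audit.append((action.name, "rolled_back"))
        return True


# An in-memory set stands in for a real firewall; the rule text is illustrative.
firewall_rules = set()
block = Action(
    "push_firewall_rule",
    execute=lambda: firewall_rules.add("deny 203.0.113.7"),
    rollback=lambda: firewall_rules.discard("deny 203.0.113.7"),
)
runner = ActionRunner(allowed={"push_firewall_rule", "open_ticket"})

first = runner.run(block)                                       # no approver yet
second = runner.run(block, approved_by="analyst@example.com")   # approved, runs
rule_present = "deny 203.0.113.7" in firewall_rules
undone = runner.undo_last()
```

The audit trail ends up with three entries for one action: pending approval, executed with the approver's identity, and rolled back. That ordering is what lets a reviewer reconstruct who approved what and what was actually executed.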

Here's a concrete workflow showing the full architecture in action:

This architectural pattern will matter across the entire market, not just for one product category. Every enterprise will face this problem as agents multiply. Every security team building internal agents will run into it. Every vendor shipping agentic workflows will need to answer for it.

The winners won't be the agents with the flashiest UX. They'll be the platforms that provide trusted context and controlled execution. Agent quality will increasingly depend on the infrastructure around the agent, not just the model inside it.

We should be honest about what's still maturing. Data quality and normalization remain hard problems. Permissioning for agents is an immature discipline. Human trust will lag behind technical capability for a while. Tool ecosystems are still fragmented. Cost and compute efficiency will be a real constraint.

But the opportunities this architecture unlocks are transformative. Every security practitioner gains leverage through specialized agents. Exposure triage becomes continuous instead of episodic. Remediation gets faster and more adaptive. Cross-functional coordination improves because actions flow directly into operational systems (tickets, PRs, firewall rules) instead of sitting in dashboards waiting for a human to copy-paste.

Most importantly: organizations can let many agents operate without losing oversight. Security teams transform from manually executing work to supervising intelligent systems of execution. That's not an incremental improvement. It's a new operating model.

As AI becomes operational in security, the center of the architecture shifts. Not away from security products entirely (they still generate critical data and execute critical actions) but away from isolated interfaces, toward shared intelligence, shared control, and shared action pathways.

The Zafran Exposure Gateway is built for this moment. A single governed interface where every agent gets rich exposure context, scoped access, and auditable action paths. The control plane that lets security teams transform their security workflows, operationalizing AI agents across their organization without sacrificing the governance that makes enterprise security possible.

If every security professional is going to have agents, those agents need more than access. They need architecture.
